PySpark filter

In big data processing, PySpark has emerged as a powerful tool for data scientists. It allows for distributed data processing, which is essential when dealing with large datasets. One common operation in data processing is filtering data based on certain conditions. A PySpark DataFrame is a distributed collection of data organized into named columns.

PySpark is the Python API for Apache Spark, a popular open-source distributed data processing engine. One of the most common tasks when working with PySpark DataFrames is filtering rows based on certain conditions. The filter function is one of the most straightforward ways to do this. It takes a boolean expression as an argument and returns a new DataFrame containing only the rows that satisfy the condition. When you combine several conditions with & (and) or | (or), wrap each condition in parentheses: in Python these operators bind more tightly than comparisons such as == or >=, so the parentheses are what keep the order of operations correct.

In this PySpark article, you will learn how to apply a filter on DataFrame columns of string, array, and struct types using single and multiple conditions, and how to filter using isin. Note: the PySpark Column class provides several methods that can be used with filter. The filter function takes a single argument, filter(condition), where the condition is the expression you want to filter on. Use a Column with the condition to filter rows from a DataFrame; this lets you express complex conditions by referring to column names through the DataFrame object. The same condition can also be written in another form, which first requires an import from the pyspark package. You can also filter DataFrame rows with the startswith, endswith, and contains methods of the Column class. If you have a SQL background, you will be familiar with like and rlike (regex like); PySpark provides the same methods on the Column class, so you can match similar values using wildcard characters or regular expressions. You can also use rlike to match values case-insensitively.

Alternatively, you can also use the where function to filter the rows of a PySpark DataFrame.

The isin method returns a boolean expression that evaluates to true if the value of the column expression is contained in the evaluated values of its arguments.

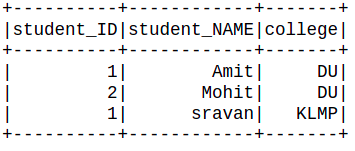

This PySpark article shows how to apply a filter to DataFrame columns of string, array, and struct types using single and multiple conditions, and how to filter using isin. The filter function takes a single argument, filter(condition), where the condition is the expression you want to filter on. To filter the rows of a DataFrame, use a Column with the condition.

In this blog, we discuss what the PySpark filter is. In the era of big data, filtering and processing vast datasets efficiently is a critical skill for data engineers and data scientists. Apache Spark, a powerful framework for distributed data processing, offers the filter operation as a versatile tool for selectively extracting data. PySpark filter is a transformation that selects a subset of rows from a DataFrame based on specific conditions. Applying it lets you focus on the data that meets your criteria, which makes subsequent analysis easier. To follow along, make sure you have Apache Spark and the pyspark Python package installed. You can use functions from the pyspark package in your conditions, combine multiple conditions, and work with multiple columns to create complex filters.

In PySpark, both the filter and where functions are used to filter data based on certain conditions. They can be used interchangeably, and both perform the same operation.

The Spark filter or where function filters the rows of a DataFrame or Dataset based on one or more given conditions.
